Category: Analytics

-

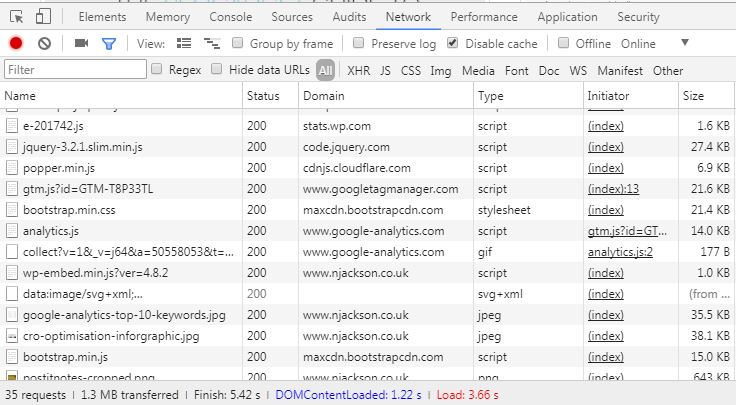

Debugging tags with your browser’s native developer tools

Your browser’s native developer tools are a great way to test third-party tags (Google Analytics, display/social pixels) if you can’t (or don’t want to) add extensions such as Google Tag Assistant or Ghostery to your browser. Every browser’s developer tools work in much the same way: when open on a page, they collect debug information…
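In the Network tab, filtering requests for `collect` typically surfaces Google Analytics hits, and each hit’s query string carries the data the tag sent. Below is a minimal sketch that decodes such a hit so you can check the values. It assumes a Universal Analytics Measurement Protocol hit (parameters such as `t`, `tid`, `cid`, `dp`). The example URL and IDs are made up, and `decode_hit` is a hypothetical helper, not part of any Google tool.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical hit URL copied from the Network tab (IDs are made up).
HIT = ("https://www.google-analytics.com/collect?v=1&t=pageview"
       "&tid=UA-12345-1&cid=555.666&dp=%2Fblog%2F&dt=Analytics")

# Readable labels for a few Universal Analytics Measurement Protocol parameters.
LABELS = {
    "v": "protocol version",
    "t": "hit type",
    "tid": "property ID",
    "cid": "client ID",
    "dp": "document path",
    "dt": "document title",
}


def decode_hit(url: str) -> dict[str, str]:
    """Return the hit's query-string parameters as a flat, URL-decoded dict."""
    query = urlsplit(url).query
    # parse_qs URL-decodes values and returns lists; keep the first value per key.
    return {key: values[0] for key, values in parse_qs(query).items()}


if __name__ == "__main__":
    for key, value in decode_hit(HIT).items():
        print(f"{LABELS.get(key, key):>16}: {value}")
```

Unknown parameters fall back to their raw names, so the same helper works for pixel requests from other vendors. You would need to look up what their parameters mean separately.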

-

Fantastic guide to conversion rate optimisation by SEOGadget

This was just too awesome not to share. It nails the good and bad ways to do conversion rate optimisation and takes a broader view than the page level, looking at the path a typical user takes to convert. A CRO infographic by SEOgadget.co.uk; read the full guide on SEOmoz.

-

Forget about “Not Provided” in Analytics. Infer your keywords by looking at landing pages

Today I checked Google Analytics to see which keywords had been driving traffic to my site, and found that (not provided) accounted for most of it. I wasn’t surprised: this blog isn’t hugely popular, isn’t updated frequently and is a part-time hobby. But I still wanted to know how people reached…
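The idea in the title can be sketched like this. The keyword is hidden, so group the (not provided) visits by landing page and treat each page’s topic as a proxy for what people searched for. This minimal Python sketch assumes a CSV export with hypothetical `Keyword`, `Landing Page` and `Visits` columns. The sample rows are made up, and `not_provided_by_landing_page` is an illustrative helper.

```python
import csv
import io
from collections import Counter

# Hypothetical export: one row per keyword/landing-page pair.
# Column names are assumptions; rename them to match your own export.
SAMPLE_EXPORT = """Keyword,Landing Page,Visits
(not provided),/blog/ab-tests-not-sure/,40
(not provided),/blog/event-tracking/,25
ab test significance,/blog/ab-tests-not-sure/,5
(not provided),/,10
"""


def not_provided_by_landing_page(csv_text: str) -> Counter:
    """Sum (not provided) visits per landing page."""
    totals: Counter = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Match the label case-insensitively in case the export capitalises it.
        if row["Keyword"].strip().lower() == "(not provided)":
            totals[row["Landing Page"]] += int(row["Visits"])
    return totals


if __name__ == "__main__":
    for page, visits in not_provided_by_landing_page(SAMPLE_EXPORT).most_common():
        print(f"{visits:>5}  {page}")
```

Pages at the top of this list are the ones whose topics most likely match the hidden searches. The known keywords that land on the same page can help narrow down what the hidden ones were.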

-

Website Optimisation – Identifying what to test

If you want your website to succeed, conversion rate optimisation is crucial. Even a half-percent increase in conversion rate can make a huge difference to the volume of leads your site generates and, more importantly, to your ROAS (return on ad spend). Given the effort-reward ratio, you should certainly set aside some time to test. I’ll be referring…
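To put the half-percent figure in perspective, here is a worked example with made-up numbers. It reads the figure as half a percentage point and assumes a site with 10,000 visits a month whose conversion rate rises from 2.0% to 2.5%:

$$
\begin{aligned}
\text{leads}_{\text{before}} &= 10\,000 \times 0.020 = 200\\
\text{leads}_{\text{after}} &= 10\,000 \times 0.025 = 250\\
\text{relative uplift} &= \frac{250 - 200}{200} = 25\%
\end{aligned}
$$

If ad spend stays the same and revenue scales with leads (an assumption), ROAS rises by the same 25%.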

-

A/B Tests: Not sure which is performing better?

On low-traffic sites, you can wait a long time for a split test to reach a conclusive result, and by the time you have a decent volume of data, the trend may have changed. So it’s time to stop looking only at visitors and conversions and look at the other metrics…
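Once you have a more frequent metric, you still need a way to tell whether the gap between variants is more than noise. This minimal Python sketch applies a standard two-proportion z-test to a rate metric such as bounce rate. The z-test is a swapped-in technique and not necessarily the approach the full post takes. The counts are made up, and `two_proportion_z_test` is an illustrative helper.

```python
import math


def two_proportion_z_test(hits_a: int, n_a: int, hits_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates.

    Uses the pooled standard error; the normal approximation assumes
    reasonably large counts in each variant.
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up example: bounces out of visits for variants A and B.
    z, p = two_proportion_z_test(hits_a=420, n_a=1000, hits_b=370, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value on a secondary metric is a hint, not proof, that one variant is better. It is best read alongside the conversion data as it accumulates.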

-

Multivariate segmentation in Google Analytics

This is my first blog post in a long while, and I’m writing it on my new HTC Desire! So, let’s get to the point. Say you are setting up a multivariate (or A/B) experiment using web optimisation software, and you also have Google Analytics installed (though any analytics package with event tracking will do). To better…

-

Tracking events with Google Analytics

We had some hyperlinked images on one of our websites, each leading to the same page but with a different $_GET parameter identifying which button had been clicked. As far as I know, that would have made the page a duplicate in Googlebot’s eyes, which would then have a minor impact on the organic…